5. A particle, initially at rest, moves along the x-axis such that its acceleration at time t > 0 is given by a(t) = -sin(t). At time t = 0, its position is x = 5.

(a) Find the velocity and position functions of the particle.

(b) For what values of time t is the particle at rest?
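
The following is a sketch of one way to work the problem, not the page's own solution. It assumes that "initially at rest" means v(0) = 0. The symbols C and D are integration constants introduced here for illustration, and k is an integer index that is likewise not part of the original statement.

```latex
\begin{align*}
v(t) &= \int a(t)\,dt = \int -\sin t \,dt = \cos t + C, \\
v(0) &= 1 + C = 0 \implies C = -1, \quad\text{so } v(t) = \cos t - 1, \\
x(t) &= \int v(t)\,dt = \int (\cos t - 1)\,dt = \sin t - t + D, \\
x(0) &= D = 5, \quad\text{so } x(t) = \sin t - t + 5, \\
v(t) &= 0 \iff \cos t = 1 \iff t = 2\pi k, \quad k = 1, 2, 3, \dots
\end{align*}
```

Under these assumptions, the particle is at rest at every positive multiple of 2π (and at t = 0 by the initial condition). Because cos t - 1 ≤ 0 for all t, the velocity is never positive, so the particle only ever moves in the negative x-direction between these instants.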

Calculus: Early Transcendentals

8th Edition

ISBN: 9781285741550

Author: James Stewart

Publisher: Cengage Learning

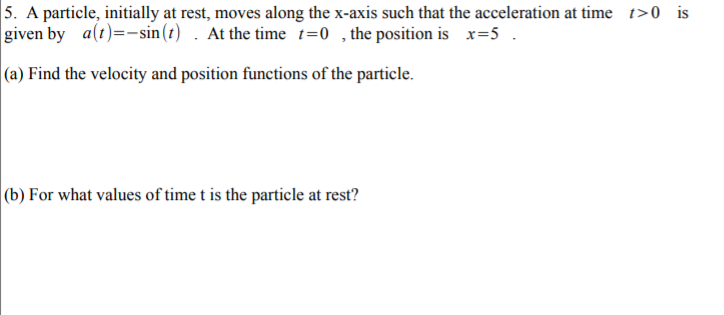
