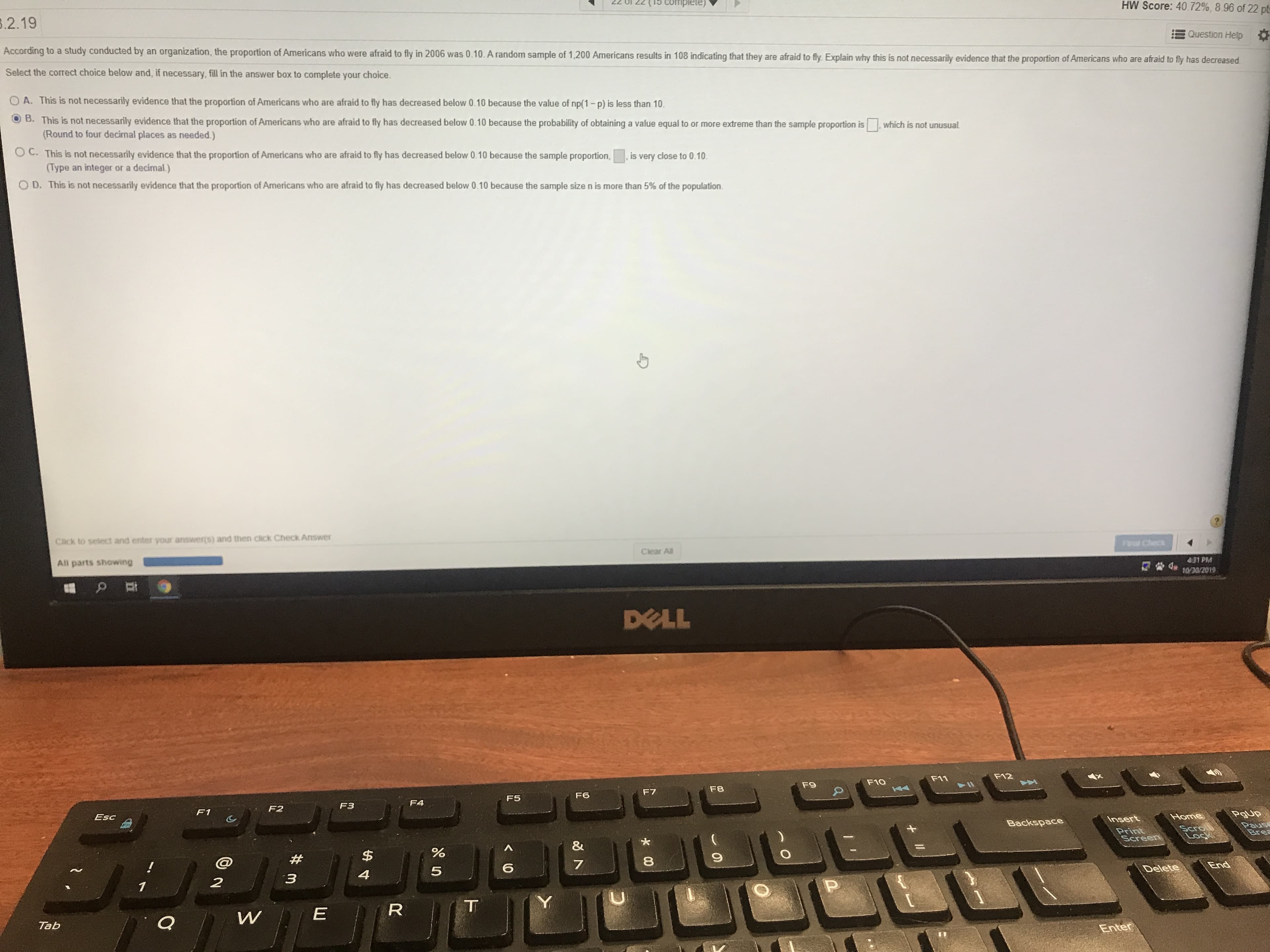

According to a study conducted by an organization, the proportion of Americans who were afraid to fly in 2006 was 0.10. A random sample of 1,200 Americans results in 108 indicating that they are afraid to fly. Explain why this is not necessarily evidence that the proportion of Americans who are afraid to fly has decreased.

Select the correct choice below and, if necessary, fill in the answer box to complete your choice.

A. This is not necessarily evidence that the proportion of Americans who are afraid to fly has decreased below 0.10 because the value of np(1-p) is less than 10.

B. This is not necessarily evidence that the proportion of Americans who are afraid to fly has decreased below 0.10 because the probability of obtaining a value equal to or more extreme than the sample proportion is ____ (round to four decimal places as needed), which is not unusual.

C. This is not necessarily evidence that the proportion of Americans who are afraid to fly has decreased below 0.10 because the sample proportion, ____ (type an integer or a decimal), is very close to 0.10.

D. This is not necessarily evidence that the proportion of Americans who are afraid to fly has decreased below 0.10 because the sample size n is more than 5% of the population.
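The quantities the choices refer to can be checked numerically. The sketch below is one way to do that, not the official solution: it assumes the sample proportion is approximately normal with mean 0.10 and standard deviation sqrt(p(1-p)/n), and the variable names (`p0`, `p_hat`, `p_value`) are illustrative.

```python
from math import sqrt
from statistics import NormalDist

p0 = 0.10   # claimed population proportion (2006 study)
n = 1200    # sample size
x = 108     # respondents in the sample who are afraid to fly

p_hat = x / n                      # sample proportion
check = n * p0 * (1 - p0)          # normal approximation is usually accepted when this is >= 10
se = sqrt(p0 * (1 - p0) / n)       # standard deviation of the sample proportion under p0
z = (p_hat - p0) / se              # standardized distance of p_hat from p0

# Assumption: "equal to or more extreme" means a sample proportion
# at or below p_hat, i.e. the lower tail of the standard normal.
p_value = NormalDist().cdf(z)

print(f"p_hat = {p_hat:.2f}")
print(f"np(1-p) = {check:.0f}")
print(f"z = {z:.4f}")
print(f"P(p_hat <= {p_hat:.2f}) = {p_value:.4f}")
```

Under these assumptions np(1-p) is 108, so the condition in choice A does not hold, and the lower-tail probability comes out at roughly 0.12. That is well above the usual 0.05 cutoff for an unusual result, which points toward choice B. A printed z-table that rounds z to two decimals can give a slightly different fourth decimal place.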

