A source has an alphabet {a1, a2, a3, a4} with corresponding probabilities {0.1, 0.2, 0.5, 0.2}. 1. Find the entropy of the source. 2. What is the minimum required average code word length to represent this source for error-free reconstruction? 3. Design a Huffman code for the source and compare the average length of the Huffman code with the entropy of the source. 4. Design a Huffman code for the second extension of the source (take two letters at a time). What is the average code word length? What is the average number of binary letters required per source output letter? 5. Which is the more efficient coding scheme: Huffman coding of the original source or Huffman coding of the second extension of the source?

Parts 4 and 5 only.

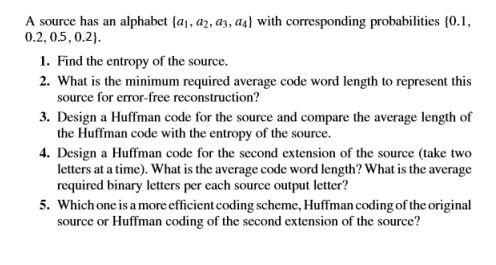

Transcribed Image Text: A source has an alphabet {a1, a2, a3, a4} with corresponding probabilities {0.1, 0.2, 0.5, 0.2}.

1. Find the entropy of the source.

2. What is the minimum required average code word length to represent this source for error-free reconstruction?

3. Design a Huffman code for the source and compare the average length of the Huffman code with the entropy of the source.

4. Design a Huffman code for the second extension of the source (take two letters at a time). What is the average code word length? What is the average number of binary letters required per source output letter?

5. Which is the more efficient coding scheme: Huffman coding of the original source or Huffman coding of the second extension of the source?
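As a way to check the numbers for parts 1 through 5, here is a minimal Python sketch. It is not the posted solution. It assumes the source is memoryless, so each pair in the second extension has probability p(x)·p(y). The helper names (`entropy`, `huffman_lengths`, `average_length`) and the labels `a1`..`a4` are illustrative choices, not part of the problem statement. Probabilities are stored as `Fraction` values so the average lengths come out exact.

```python
import heapq
from fractions import Fraction
from itertools import product
from math import log2


def entropy(probs):
    """Shannon entropy in bits per symbol."""
    return -sum(float(p) * log2(float(p)) for p in probs if p > 0)


def huffman_lengths(probs):
    """Return the binary Huffman codeword length of every symbol.

    Heap entries are (probability, tie_breaker, symbols_in_subtree); the
    unique tie_breaker keeps Python from ever comparing the symbol lists.
    """
    heap = [(p, i, [s]) for i, (s, p) in enumerate(probs.items())]
    heapq.heapify(heap)
    lengths = {s: 0 for s in probs}
    counter = len(heap)
    while len(heap) > 1:
        p1, _, syms1 = heapq.heappop(heap)  # least probable subtree
        p2, _, syms2 = heapq.heappop(heap)  # second least probable subtree
        for s in syms1 + syms2:
            lengths[s] += 1  # every symbol under the merge gains one bit
        heapq.heappush(heap, (p1 + p2, counter, syms1 + syms2))
        counter += 1
    return lengths


def average_length(probs, lengths):
    """Expected codeword length in bits per coded symbol."""
    return sum(probs[s] * lengths[s] for s in probs)


# Source from the question (symbol labels are illustrative).
source = {
    "a1": Fraction(1, 10),
    "a2": Fraction(2, 10),
    "a3": Fraction(5, 10),
    "a4": Fraction(2, 10),
}

# Second extension: all 16 ordered pairs, assuming independent letters.
pairs = {x + y: source[x] * source[y] for x, y in product(source, repeat=2)}

H = entropy(source.values())                            # parts 1 and 2
L1 = average_length(source, huffman_lengths(source))    # part 3
L2 = average_length(pairs, huffman_lengths(pairs))      # part 4, bits per pair
L2_per_letter = L2 / 2                                  # part 4, bits per letter

print(f"entropy               = {H:.4f} bits/letter")
print(f"Huffman, original     = {float(L1):.4f} bits/letter")
print(f"Huffman, 2nd extension = {float(L2):.4f} bits/pair "
      f"= {float(L2_per_letter):.4f} bits/letter")
print(f"efficiency original   = {H / float(L1):.4f}")
print(f"efficiency extension  = {H / float(L2_per_letter):.4f}")
```

Under these assumptions the sketch gives an entropy of about 1.761 bits per letter, which is also the minimum average code word length for error-free reconstruction. The Huffman code for the original source averages 1.8 bits per letter, and the code for the second extension averages 3.57 bits per pair, or 1.785 bits per letter. The second extension is therefore slightly more efficient. The individual codewords depend on how ties are broken, but the average lengths do not.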
