Linear Algebra: A Modern Introduction

4th Edition

ISBN:9781285463247

Author:David Poole

Publisher:David Poole

Chapter3: Matrices

Section3.7: Applications

Problem 3EQ: In Exercises 1-4, let P=[0.50.30.50.7] be the transition matrix for a Markov chain with two states....

Related questions

Question

100%

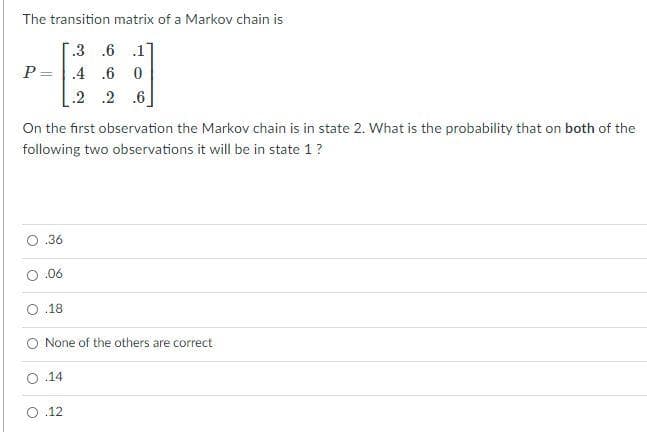

The transition matrix of a Markov chain is

$$
P = \begin{bmatrix}
0.3 & 0.6 & 0.1 \\
0.4 & 0.6 & 0 \\
0.2 & 0.2 & 0.6
\end{bmatrix}
$$

On the first observation the Markov chain is in state 2. What is the probability that on both of the following two observations it will be in state 1?

Answer choices:

0.36

0.06

0.18

None of the others are correct

0.14

0.12
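A minimal worked sketch, assuming the row-stochastic convention in which $p_{ij}$ is the probability of moving from state $i$ to state $j$. This is the only reading under which $P$ is a valid transition matrix: each row sums to 1, while the columns sum to 0.9, 1.4 and 0.7. Writing $X_n$ for the state at observation $n$, the Markov property lets the probability of the specific path 2 → 1 → 1 factor into one-step transitions: $P(X_2 = 1, X_3 = 1 \mid X_1 = 2) = p_{21}\,p_{11} = 0.4 \times 0.3 = 0.12$. The two-step entry $(P^2)_{21} = 0.4 \cdot 0.3 + 0.6 \cdot 0.4 + 0 \cdot 0.2 = 0.36$ answers a different question (being in state 1 at the third observation, whatever the second one was), so the choice 0.36 corresponds to a different event. The Python below checks both numbers; the helper names `path_probability` and `two_step` are illustrative, not taken from the source.

```python
from fractions import Fraction

# Transition matrix from the question, assuming the row-stochastic convention:
# P[i][j] is the probability of moving from state i+1 to state j+1
# (Python indices are 0-based; the question numbers its states 1..3).
P = [
    [Fraction("0.3"), Fraction("0.6"), Fraction("0.1")],
    [Fraction("0.4"), Fraction("0.6"), Fraction("0")],
    [Fraction("0.2"), Fraction("0.2"), Fraction("0.6")],
]

# The row convention is only valid if every row sums to 1.
assert all(sum(row) == 1 for row in P)


def path_probability(P, path):
    """Probability of observing the states in path[1:] (1-based),
    given that the chain is in state path[0] at the first observation."""
    prob = Fraction(1)
    for current, nxt in zip(path, path[1:]):
        prob *= P[current - 1][nxt - 1]  # multiply one-step transitions
    return prob


def two_step(P, i, j):
    """Entry (i, j) of P^2 (1-based): probability of being in state j
    two steps after state i, summed over every intermediate state."""
    return sum(P[i - 1][k] * P[k][j - 1] for k in range(len(P)))


# Path 2 -> 1 -> 1: state 1 on both of the next two observations.
print(float(path_probability(P, [2, 1, 1])))  # 0.12
# (P^2)[2][1] also counts paths through other states, e.g. 2 -> 2 -> 1.
print(float(two_step(P, 2, 1)))  # 0.36
```

Using `Fraction` keeps the arithmetic exact, so comparing the result with the listed choices is not affected by floating-point rounding.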
