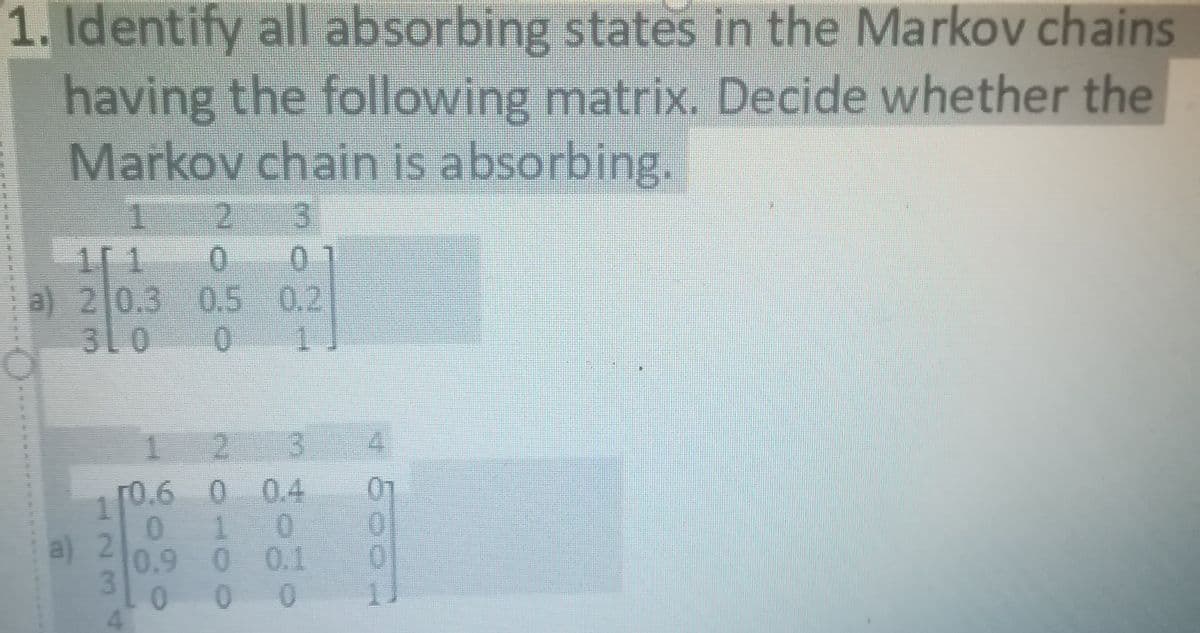

1. Identify all absorbing states in the Markov chain having the following matrix. Decide whether the Markov chain is absorbing.
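The check asked for here can be sketched in code. The matrix below is a hypothetical row-stochastic example, not the one from the exercise image, and the function names are illustrative. The sketch assumes the usual textbook definitions: a state i is absorbing when P[i][i] = 1, and a chain is absorbing when it has at least one absorbing state and every state can reach some absorbing state. Indices are 0-based.

```python
from fractions import Fraction as F

def absorbing_states(P):
    """Return indices i with P[i][i] == 1 (the chain never leaves state i)."""
    return [i for i, row in enumerate(P) if row[i] == 1]

def is_absorbing_chain(P):
    """True if an absorbing state exists and every state can reach one."""
    absorbing = set(absorbing_states(P))
    if not absorbing:
        return False
    n = len(P)
    # Backward search: grow the set of states that can reach an absorbing state.
    can_reach = set(absorbing)
    changed = True
    while changed:
        changed = False
        for i in range(n):
            if i not in can_reach and any(P[i][j] > 0 for j in can_reach):
                can_reach.add(i)
                changed = True
    return len(can_reach) == n

# Hypothetical 3-state matrix (rows sum to 1), NOT the matrix from the exercise.
P_example = [
    [F(1), F(0), F(0)],
    [F(1, 4), F(1, 2), F(1, 4)],
    [F(0), F(0), F(1)],
]
print(absorbing_states(P_example))    # [0, 2]
print(is_absorbing_chain(P_example))  # True
```

To apply it to the exercise, replace `P_example` with the matrix from the image, written row by row.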

Q: Can a Markov chain in general have an infinite number of states?

A: A Markov chain is a stochastic model which describes a sequence of possible events where the…

Q: At Community College, 10% of all business majors switched to another major the next semester, while…

A:

Q: Consider the following Markov Chain. Determine the probability of landing in state 3. (Use 3 sf, in…

A: Solution

Q: Consider the Markov chain represented by the matrix

A: Given information: In the given Markov model, there are 3 states. A state transition matrix consists…

Q: Which of the Markov chains represented by the following transition matrices are regular [1/2 1/2] P…

A: A matrix whose rows each sum to 1 is a valid transition matrix; it is regular if some power of it has only positive entries…
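For reference, the usual textbook test for regularity is that some power of the transition matrix has only positive entries; rows summing to 1 only makes the matrix stochastic. A minimal sketch of that test follows. The function names and the example matrices are illustrative, not taken from the truncated question. The loop relies on Wielandt's bound: if an n×n matrix is regular, a positive power appears by exponent (n − 1)² + 1.

```python
def mat_mul(A, B):
    """Plain matrix product for square lists of lists."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def is_regular(P):
    """True if some power of P has only positive entries."""
    n = len(P)
    # Only the zero pattern matters, so work with 0/1 entries.
    pattern = [[1 if x > 0 else 0 for x in row] for row in P]
    power = pattern
    # Check powers 1, 2, ..., (n-1)^2 + 1 (Wielandt's bound).
    for _ in range((n - 1) ** 2 + 1):
        if all(x > 0 for row in power for x in row):
            return True
        product = mat_mul(power, pattern)
        power = [[1 if x > 0 else 0 for x in row] for row in product]
    return False

# Hypothetical examples.
print(is_regular([[0.5, 0.5], [0.5, 0.5]]))  # True: already positive
print(is_regular([[0, 1], [0.5, 0.5]]))      # True: the square is positive
print(is_regular([[0, 1], [1, 0]]))          # False: powers alternate
```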

Q: If the student attends class on a certain Friday, then he is three times as likely to be absent the…

A: Let Si, i=1,2 denote the state i, where state 1 is Attends Class and state 2 is Absent from class.…

Q: A state vector X for a three-state Markov chain is such that the system is as likely to be in state 3…

A: The specified ratio is 4:1:1 and the sum (probabilities) has to be 1.
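The normalization step in this answer can be sketched as follows. The helper name is hypothetical. Because the question is truncated, the code does not say which state carries which weight; it only divides each entry of the ratio by the total so the probabilities sum to 1.

```python
from fractions import Fraction

def state_vector_from_ratio(ratio):
    """Scale a list of relative likelihoods so that the entries sum to 1."""
    total = sum(ratio)
    return [Fraction(r, total) for r in ratio]

# Ratio 4:1:1 from the answer above; entries keep the order of the ratio.
X = state_vector_from_ratio([4, 1, 1])
print(X)  # [Fraction(2, 3), Fraction(1, 6), Fraction(1, 6)]
```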

Q: Let Xt be a continuous-time Markov chain with state space {1,2} and rates a(1, 2) = 1,

A: From the given information, Xt is a continuous-time Markov chain with state space {1, 2}.

Q: Consider a Markov chain with two states 1, 2. Suppose that P1,2 = a, P2,1 = b. For which values of a…

A:

Q: Suppose a Markov Chain has transition matrix [entries lost in extraction]. If the system starts in state 1, what is the…

A:

Q: If a system represented by the following Markov Chain starts in state C. what is the probability…

A: The question gives the states of a Markov chain and asks for the probability…

Q: Consider the Markov chain with three states, S={1,2,3}, that has the following transition matrix P=…

A: We are given a Markov chain with three states, S = {1, 2, 3}. Also, P(X1=1) = P(X1=2) =…

Q: A professor either walks or drives to a university. He never drives two days in a row, but if he…

A: If the professor walks today, then he is almost sure to walk the next day too. Thus, the probability of this…

Q: If the student attends class on a certain Friday, then he is four times as likely to be absent the…

A: Given Information: If the student attends class on Friday then he is four times as likely to be…

Q: A continuous-time Markov chain (CTMC) has the following Q = (qij) matrix (all rates are…

A: Given a continuous-time Markov chain with Q = (qij) = 00412270294627390381230 (matrix entries garbled in extraction). Given that…

Q: Classify the following recurrent Markov chains as periodic or aperiodic. [state diagrams not recovered]…

A: Since you have posted a question with multiple sub-parts, we will solve the first three subparts…

Q: A state vector X for a four-state Markov chain is such that the system is four times as likely to be…

A: Here as we know that f(x = 3) *4 = f(x = 4)

Q: 1. Suppose the transition matrix of a Markov chain is 0.7 0.3 0.1 0.5 0.4 0.4 0.6 a. Find p12(2),…

A: We want to find (a) p12(2),p21(2) and p22(2) (b) we want to find the stable vector

Q: Suppose that a Markov chain with 4 states and with transition matrix P is in state 3 on the fifth…

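A: The given Markov chain has 4 states, with $P = \begin{pmatrix} P_{11} & P_{12} & P_{13} & P_{14} \\ P_{21} & P_{22} & P_{23} & P_{24} \\ P_{31} & P_{32} & P_{33} & P_{34} \\ P_{41} & P_{42} & P_{43} & P_{44} \end{pmatrix}$, where Pij denotes…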

Q: Suppose that a basketball player's success in free-throw shooting can be described with a Markov…

A: Hello! As you have posted more than 3 sub parts, we are answering the first 3 sub-parts. In case…

Q: (a) Give the transition matrix M for the corresponding Markov chain. (b) (Using the online app at…

A:

Q: In Smalltown, 90% of all sunny days are followed by sunny days, and 80% of all cloudy days are…

A:

Q: Suppose that a Markov chain with 4 states and with transition matrix P is in state 4 on the fourth…

A: Given: There are 4 states in a Markov chain Transition matrix = P

Q: A state vector X for a three-state Markov chain is such that the system is as likely to be in state…

A:

Q: Suppose a Markov Chain has transition matrix [entries lost in extraction]

A: From the given information, the transition matrix is $P = \begin{pmatrix} 1/8 & 1/4 & 3/8 & 1/4 \\ 1/8 & 1/8 & 1/8 & 5/8 \\ 1/8 & 3/8 & 1/4 & 1/4 \\ 3/8 & 1/4 & 1/8 & 1/4 \end{pmatrix}$. In the…

Q: Suppose a Markov Chain has transition matrix [entries lost in extraction]. If the system starts in state 3, what is…

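A: The given transition matrix is $\begin{pmatrix} 1/8 & 1/4 & 1/4 & 3/8 \\ 1/4 & 0 & 3/8 & 3/8 \\ 1/4 & 0 & 1/2 & 1/4 \\ 1/4 & 1/4 & 0 & 1/2 \end{pmatrix}$. The given transition matrix is in the form of…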

Q: Classify the following recurrent Markov chains as periodic or aperiodic. [state diagrams not recovered]…

A: Since you have posted a question with multiple sub-parts, we will solve the first three subparts…

Q: Draw a transition diagram that corresponds to the following Markov chain: .98 .80 .95 | a, .02 0. by…

A: From the given information, there are three states: ak, bk, ck. And the transition matrix is,

Q: Which of the following Markov chains best represents the given transition matrix? Choose from the…

A:

Q: A state vector X for a four-state Markov chain is such that the system is three times as likely to…

A:

Q: Suppose that a Markov chain with 4 states and with transition matrix P is in state 4 on the fourth…

A: Given that

Q: The diagrams below show three Markov chains, where arrows indicate a non-zero transition…

A: A Markov chain is said to be irreducible if there is a nonzero probability of reaching state j…
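One way to test irreducibility mechanically is a reachability search over the arrows with nonzero probability. The sketch below is a minimal version under that reading of the definition. The function names and the example matrices are hypothetical and are not the three chains from the diagrams.

```python
def reachable_from(P, start):
    """States reachable from `start` along transitions with positive probability."""
    seen = {start}
    stack = [start]
    while stack:
        i = stack.pop()
        for j, p in enumerate(P[i]):
            if p > 0 and j not in seen:
                seen.add(j)
                stack.append(j)
    return seen

def is_irreducible(P):
    """True if every state can reach every other state."""
    n = len(P)
    return all(len(reachable_from(P, i)) == n for i in range(n))

# Hypothetical examples (0-based state indices).
cycle = [[0, 1, 0], [0, 0, 1], [1, 0, 0]]  # 0 -> 1 -> 2 -> 0
trap = [[1, 0], [0.5, 0.5]]                # state 0 is never left
print(is_irreducible(cycle))  # True
print(is_irreducible(trap))   # False
```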

Q: A wandering ghost travels between four regions of the island where it lives: Toronbo Shores,…

A: 2) Let A denote Toronbo Shores, B denote Koholint Prairie, C denote Tabahi Wasteland, and D…

Q: Suppose that a Markov chain with 3 states and with transition matrix P is in state 2 on the second…

A: Given: P is the transition matrix of a Markov chain with 3 states. Also, the Markov chain is in…

Q: Suppose that a basketball player’s success in free-throw shooting can be described with a Markov…

A: Given: if she misses her first free throw, then the probability of missing the third and fifth throws =…

Q: A continuous-time Markov chain (CTMC) has three states {1, 2, 3}. The average time the process stays…

A: From the given information, there are 3 states {1, 2, 3}. The average time the process stays in states 1, 2…

Q: An absorbing Markov Chain has 5 states where states #1 and #2 are absorbing states and the following…

A: *Answer:

Q: A continuous-time Markov chain (CTMC) has the following Q = (qij) matrix (all rates are…

A: From the given information, the formula for the balance equations is [formula not recovered]. Here, S represents the state space.…
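In matrix form, the global balance equations of a CTMC are πQ = 0 together with Σ πᵢ = 1. A minimal sketch of solving them exactly is given below. The 2×2 rate matrix is a hypothetical example, since the matrix from the question is truncated, and the helper names are illustrative. The sketch assumes an irreducible chain, so the solution is unique.

```python
from fractions import Fraction as F

def solve_linear(A, b):
    """Solve A x = b by Gauss-Jordan elimination (A square and nonsingular)."""
    n = len(A)
    M = [[F(x) for x in row] + [F(rhs)] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = next(r for r in range(col, n) if M[r][col] != 0)
        M[col], M[pivot] = M[pivot], M[col]
        M[col] = [x / M[col][col] for x in M[col]]
        for r in range(n):
            if r != col and M[r][col] != 0:
                factor = M[r][col]
                M[r] = [x - factor * y for x, y in zip(M[r], M[col])]
    return [M[r][n] for r in range(n)]

def ctmc_stationary(Q):
    """Solve pi Q = 0 with sum(pi) = 1 for an irreducible generator Q."""
    n = len(Q)
    # Balance equation for state j: sum_i pi_i * Q[i][j] = 0 (rows of Q transposed).
    A = [[Q[i][j] for i in range(n)] for j in range(n)]
    b = [0] * n
    # The n balance equations are linearly dependent, so the last one
    # is replaced by the normalization sum(pi) = 1.
    A[-1] = [1] * n
    b[-1] = 1
    return solve_linear(A, b)

# Hypothetical generator: leave state 1 at rate 1, leave state 2 at rate 2.
Q_example = [[-1, 1], [2, -2]]
print(ctmc_stationary(Q_example))  # [Fraction(2, 3), Fraction(1, 3)]
```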

Q: Consider the Markov chain with three states, S = {1, 2, 3}, that has the following transition matrix…

A: Given that P(X1=1) = P(X1=2) = 1/4, find P(X1=3, X2=2, X3=1)

Q: Suppose that the probability that tomorrow will be a wet day is 0.662 if today is wet and 0.250 if…

A: (a) We know that in the transition matrix P entry pij is the probability of moving from state j to…

Q: A state vector X for a four-state Markov chain is such that the system is four times as likely to be…

A: Let the four states be denoted as a, b, c and d respectively. In a state vector, sum of all the…

Q: Suppose that a Markov chain has the following transition matrix The recurrent states are A₁ A₂ A3 A4…

A: We are given a Markov chain with its transition matrix and have to find the recurrent states.

Q: A continuous-time Markov chain (CTMC) has the following Q = (qij) matrix (all rates are…

A: According to the given transition rate matrix, for state 3 the number of transitions in previous states…

Q: A continuous-time Markov chain (CTMC) has three states {1, 2, 3}. The average time the process stays…

A: From the given information, there are 3 states {1, 2, 3}. The average time the process stays in states 1, 2…

Q: Suppose that a Markov chain with 3 states and with transition matrix P is in state 3 on the first…

A: The given Markov chain has 3 states x1, x2, x3 and transition matrix P, and it is in state 3 on the first…

Q: A state vector X for a three-state Markov chain is such that the system is as likely to be in state…

A:

Q: A state vector X for a three-state Markov chain is such that the system is as likely to be in state…

A:

Q: Consider a Markov chain with two possible states, S = {0, 1}. In particular, suppose that the…

A:

Q: Describe the process of designing the operation of a discrete-time Markov chain?

A: Markov Chains are extremely useful for modelling discrete-time, discrete-space stochastic processes…
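As an illustration of how a discrete-time chain operates, the sketch below simulates one: at each step the next state is drawn using only the row of the transition matrix for the current state. The two-state matrix and the function name are hypothetical, chosen only for the example.

```python
import random

def simulate_chain(P, start, steps, rng):
    """Return a path of length steps + 1 generated by transition matrix P."""
    path = [start]
    state = start
    for _ in range(steps):
        # The next state depends only on the current state (Markov property).
        state = rng.choices(range(len(P)), weights=P[state])[0]
        path.append(state)
    return path

# Hypothetical two-state chain; row i holds the probabilities of leaving state i.
P_example = [[0.9, 0.1], [0.2, 0.8]]
rng = random.Random(0)  # fixed seed so the run is repeatable
path = simulate_chain(P_example, start=0, steps=10, rng=rng)
print(path)
```

Passing the random generator in explicitly keeps the function easy to test and makes runs reproducible.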


- Explain how you can determine the steady state matrix X of an absorbing Markov chain by inspection.

- Suppose that X0, X1, X2, ... form a Markov chain on the state space {1, 2}. Assume that P(X0 = 1) = P(X0 = 2) = 1/2 and that the matrix of transition probabilities for the chain has the following entries: Q11 = 1/2, Q12 = 1/2, Q21 = 1/3, Q22 = 2/3. Find lim n→∞ P(Xn = 1).

- Suppose that a Markov chain with 3 states and with transition matrix P is in state 2 on the second observation. Which of the following expressions represents the probability that it will be in state 3 on the third observation? (A) the (2, 3) entry of P³ (B) the (2, 3) entry of P² (C) the (3, 3) entry of P² (D) the (2, 2) entry of P³ (E) the (2, 3) entry of P (F) the (3, 2) entry of P (G) the (3, 2) entry of P³ (H) the (3, 2) entry of P²

- A state vector X for a three-state Markov chain is such that the system is as likely to be in state 3 as in state 1 and is five times as likely to be in state 2 as in 3. Find the state vector X.

- A state vector X for a three-state Markov chain is such that the system is as likely to be in state 3 as in state 1 and is three times as likely to be in state 2 as in 3. Find the state vector X.

- Consider the Markov chain with three states, S = {1, 2, 3}, that has the following transition matrix. If we know P(X1=1) = P(X1=2) = 1/4, find P(X1=3, X2=2, X3=1).

- A state vector X for a three-state Markov chain is such that the system is as likely to be in state 3 as in state 1 and is four times as likely to be in state 2 as in 3. Find the state vector X.

- If A is a Markov matrix, why doesn't I + A + A² + ··· add up to (I − A)⁻¹?

- A museum consists of six rooms of equal sizes arranged in the form of a grid with three rows and two columns. Each interior wall has a door that connects to adjacent rooms. Guards move through the rooms through the interior doors. Represent the movements of each guard in the museum as a Markov chain, and show that its states are periodic with period t = 2.

- For a Markov matrix, the sum of the components of x equals the sum of the components of Ax. If Ax = λx with λ ≠ 1, prove that the components of this non-steady eigenvector x add to zero.

- Consider the Markov chain having a three-state space, namely {E0, E1, E2}, and transition matrix P (rows and columns ordered E0, E1, E2): $P = \begin{pmatrix} 0 & 1/2 & 1/2 \\ 1/4 & 3/4 & 0 \\ 1/2 & 0 & 1/2 \end{pmatrix}$. Compute $p_{10}^{(2)}$. Select one: a. 0.025 b. 3/8 c. 0 d. (0.5)²

- For the attached transition probability matrix for a Markov chain {Xn ; n = 0, 1, 2, ...}: a) How many classes exist, and which two states are the absorbing states? b) What is lim n→∞ P{Xn = 3 | X0 = 3}? c) What is lim n→∞ P{Xn = 1 | X0 = 3}?
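For the two-state chain in the list above with Q11 = 1/2, Q12 = 1/2, Q21 = 1/3, Q22 = 2/3 and a uniform initial distribution, the limit of P(Xn = 1) can be checked numerically. The sketch below iterates the distribution and compares it with the standard closed form for a two-state chain, π = (b/(a+b), a/(a+b)) with a = Q12 and b = Q21. Variable and function names are illustrative, and states 1 and 2 are stored at indices 0 and 1.

```python
from fractions import Fraction as F

def step(dist, P):
    """One step of the chain: new_dist[j] = sum_i dist[i] * P[i][j]."""
    n = len(P)
    return [sum(dist[i] * P[i][j] for i in range(n)) for j in range(n)]

# Transition probabilities and initial distribution from the question.
P = [[F(1, 2), F(1, 2)],
     [F(1, 3), F(2, 3)]]
dist = [F(1, 2), F(1, 2)]
for _ in range(50):
    dist = step(dist, P)

# Closed form for a two-state chain with a = Q12 and b = Q21.
a, b = P[0][1], P[1][0]
pi = [b / (a + b), a / (a + b)]
print(pi)              # [Fraction(2, 5), Fraction(3, 5)]
print(float(dist[0]))  # close to 0.4 after 50 steps
```

Exact fractions are used so that the comparison with the closed form does not depend on floating-point rounding.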