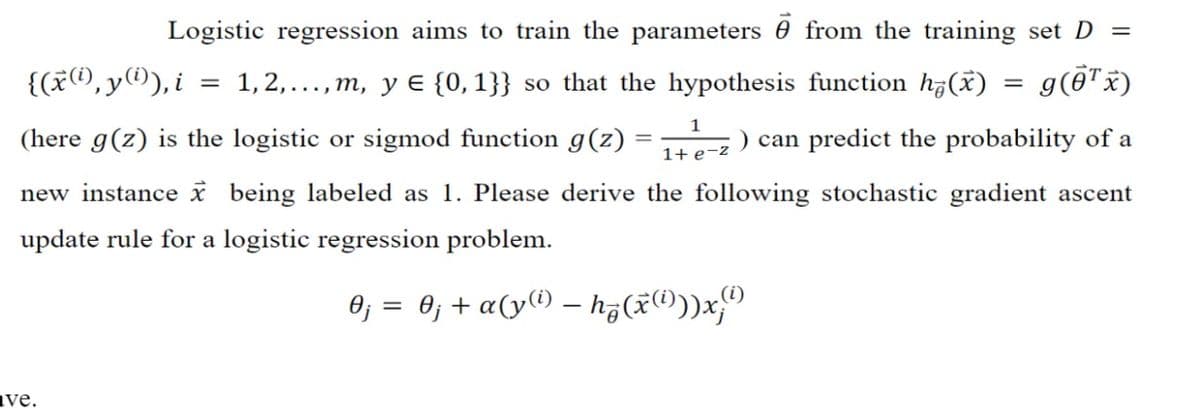

Logistic regression aims to train the parameters $\theta$ from the training set $D = \{(x^{(i)}, y^{(i)}),\ i = 1, 2, \ldots, m,\ y \in \{0, 1\}\}$ so that the hypothesis function $h_\theta(x) = g(\theta^T x)$ (here $g(z)$ is the logistic or sigmoid function $g(z) = \frac{1}{1 + e^{-z}}$) can predict the probability of a new instance $x$ being labeled as 1. Please derive the following stochastic gradient ascent update rule for a logistic regression problem:

$$\theta_j = \theta_j + \alpha \left( y^{(i)} - h_\theta(x^{(i)}) \right) x_j^{(i)}$$
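
One possible route to the rule is sketched here. It rests on assumptions the question does not state: the labels are Bernoulli with $P(y = 1 \mid x; \theta) = h_\theta(x)$, the examples are independent, and the objective is the log-likelihood. The symbols $\ell(\theta)$ and $\ell_i(\theta)$ (the contribution of example $i$) are introduced only for this sketch.

```latex
\begin{align*}
\ell(\theta) &= \sum_{i=1}^{m} \ell_i(\theta), \qquad
\ell_i(\theta) = y^{(i)} \log h_\theta(x^{(i)}) + \bigl(1 - y^{(i)}\bigr) \log\bigl(1 - h_\theta(x^{(i)})\bigr) \\[4pt]
g'(z) &= \frac{e^{-z}}{(1 + e^{-z})^2} = g(z)\,\bigl(1 - g(z)\bigr) \\[4pt]
\frac{\partial \ell_i(\theta)}{\partial \theta_j}
  &= \left( \frac{y^{(i)}}{h_\theta(x^{(i)})} - \frac{1 - y^{(i)}}{1 - h_\theta(x^{(i)})} \right)
     h_\theta(x^{(i)})\,\bigl(1 - h_\theta(x^{(i)})\bigr)\, x_j^{(i)} \\
  &= \Bigl( y^{(i)}\bigl(1 - h_\theta(x^{(i)})\bigr) - \bigl(1 - y^{(i)}\bigr)\, h_\theta(x^{(i)}) \Bigr)\, x_j^{(i)} \\
  &= \bigl( y^{(i)} - h_\theta(x^{(i)}) \bigr)\, x_j^{(i)}
\end{align*}
```

Stochastic gradient ascent moves $\theta_j$ a step of size $\alpha$ along this single-example gradient, which gives $\theta_j = \theta_j + \alpha \left( y^{(i)} - h_\theta(x^{(i)}) \right) x_j^{(i)}$.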

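The update rule in the question can also be checked numerically. The following is a minimal sketch in plain Python, not a reference implementation: the function names, the toy data set, the learning rate, and the epoch count are all hypothetical choices made for illustration.

```python
import math
import random


def sigmoid(z):
    """Logistic function g(z) = 1 / (1 + e^{-z})."""
    return 1.0 / (1.0 + math.exp(-z))


def predict(theta, x):
    """Hypothesis h_theta(x) = g(theta^T x)."""
    return sigmoid(sum(t * xj for t, xj in zip(theta, x)))


def log_likelihood_one(theta, x, y):
    """Log-likelihood of one example, assuming a Bernoulli label model."""
    h = predict(theta, x)
    return y * math.log(h) + (1 - y) * math.log(1 - h)


def sga_step(theta, x, y, alpha):
    """One stochastic gradient ascent step:
    theta_j <- theta_j + alpha * (y - h_theta(x)) * x_j for every j."""
    err = y - predict(theta, x)
    return [t + alpha * err * xj for t, xj in zip(theta, x)]


def train(data, alpha=0.1, epochs=200, seed=0):
    """Repeatedly sweep the data in shuffled order, one example per update."""
    rng = random.Random(seed)
    data = list(data)
    theta = [0.0] * len(data[0][0])
    for _ in range(epochs):
        rng.shuffle(data)
        for x, y in data:
            theta = sga_step(theta, x, y, alpha)
    return theta


# Made-up toy data: x = [1.0 (bias term), feature]; label is 1 when the feature is positive.
toy = [([1.0, -2.0], 0), ([1.0, -1.0], 0), ([1.0, 1.0], 1), ([1.0, 2.0], 1)]
theta = train(toy)
```

Calling `predict(theta, [1.0, 2.0])` on the trained parameters should give a probability above 0.5, and `predict(theta, [1.0, -2.0])` one below 0.5. Comparing `(y - predict(theta, x)) * x[j]` with a finite-difference slope of `log_likelihood_one` is a quick way to confirm the gradient used in `sga_step`.
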