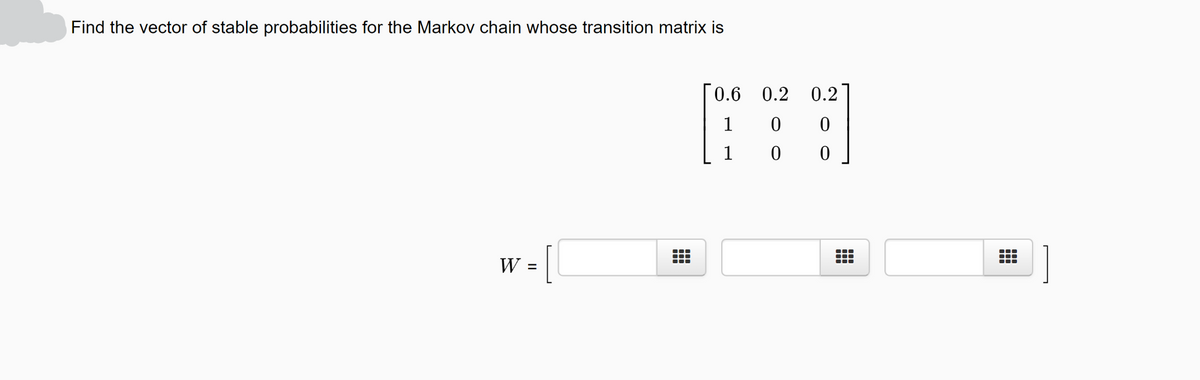

Find the vector of stable probabilities for the Markov

Q: Suppose that the model pctstck = β₀ + β₁funds + β₂risktol + u satisfies the first four Gauss-Markov…

A:

Q: Suppose you toss a six-sided die repeatedly until the product of the last two outcomes is equal to…

A: Let X be the expected number of tosses required for the product of the last two outcomes to equal 12. Here, 12 =…

Q: Derive the likelihood function of a causal and invertible AR(p) Gaussian process.

A: Please find your answer below:

Q: Suppose the transition matrix for a Markov chain is given by [! ! 11

A: Given information: In the given Markov model, there are 3 states. A state transition matrix consists…

Q: Consider the Markov chain represented by the matrix

A: Given information: In the given Markov model, there are 3 states. A state transition matrix consists…

Q: In a gambling game, a player has 4 $. He loses 2 $ with 0.30 odds while he gains 1 $ with 0.70 odds…

A:

Q: Let X be a Poisson(λ) random variable. By applying Markov's inequality to the random variable W =…

A:

Q: Suppose the city of Metropolis is experiencing a movement of its population to the suburbs. Each…

A: Given: 25% of the people that live in the city move to the suburbs. 5% of the people that live in…

Q: Let {Xₙ} be a time-homogeneous Markov chain with sample space {1, 2, 3, 4} and transition matrix P =…

A: In the question, we are given the transition probability matrix of a Markov chain. We then find the…

Q: Find the vector of stable probabilities for the Markov chain whose transition matrix is 0.2 0.4 0.4

A: Given,

Q: Suppose a government study estimates that the probability of successive generations of a rural family…

A:

Q: Assume that the probability of rain tomorrow is 0.5 if it is raining today, and assume that the…

A: (a) A stochastic process has the Markov property if the probability of future states of the process…

Q: Suppose that a group of robots is traversing this maze. At each step, each robot will choose a path…

A: First, construct the state transition diagram and then find the transition matrix. For the Markov…

Q: Do the following Markov chains converge to

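A: From the given information, $P = \begin{bmatrix} 0 & 1 & 0 & 0 \\ 0 & 0 & 0 & 1 \\ 1 & 0 & 0 & 0 \\ \tfrac{1}{3} & 0 & \tfrac{2}{3} & 0 \end{bmatrix}$. Here, the states are 1, 2, 3, 4. Consider the…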

Q: Find the vector of stable probabilities for the Markov chain whose transition matrix is:

A: Given information: In the given Markov model, there are 3 states. A state transition matrix consists…

Q: Suppose you have a hidden Markov

A: Given: a hidden Markov model. To find: the most factored form.

Q: What are the Gauss-Markov assumptions? What problems arise if a regression model does not meet…

A:

Q: 2. For an irreducible Markov chain with a stationary distribution π, show that all the states are…

A:

Q: Find the stable vector of 1 1 1 P = 2 2 3 L 4 4 Note that although this Markov chain may not be…

A:

Q: Assume that the probability of rain tomorrow is 0.5 if it is raining today, and assume that the…

A:

Q: Find the vector of stable probabilities for the Markov chain whose transition m

A: answer is in next step

Q: Give a proof of the Gauss–Markov theorem for multiple regression.

A:

Q: Suppose a Markov Chain has transition matrix % % % % %% %% % % % %

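A: From the given information, the transition matrix is $P = \begin{bmatrix} \tfrac{1}{8} & \tfrac{1}{4} & \tfrac{3}{8} & \tfrac{1}{4} \\ \tfrac{1}{8} & \tfrac{1}{8} & \tfrac{1}{8} & \tfrac{5}{8} \\ \tfrac{1}{8} & \tfrac{3}{8} & \tfrac{1}{4} & \tfrac{1}{4} \\ \tfrac{3}{8} & \tfrac{1}{4} & \tfrac{1}{8} & \tfrac{1}{4} \end{bmatrix}$. In the…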

Q: Suppose we flip a fair coin 50 times. What upper bound does Markov's Theorem give for the…

A: Let X denote the number of heads on flipping a coin 50 times. Let p be the probability of getting…
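As a rough illustration of the bound this answer sets up, the sketch below applies Markov's inequality, P(X ≥ a) ≤ E[X]/a, to the number of heads in 50 fair flips and compares it with the exact binomial tail. The threshold `a = 40` is a hypothetical value chosen only for illustration, since the question's own threshold is cut off; the function names are likewise illustrative.

```python
from math import comb

def markov_bound(mean, a):
    """Markov's inequality: P(X >= a) <= E[X] / a for non-negative X and a > 0."""
    return min(1.0, mean / a)

def binomial_tail(n, p, a):
    """Exact P(X >= a) for X ~ Binomial(n, p)."""
    return sum(comb(n, k) * p**k * (1 - p)**(n - k) for k in range(a, n + 1))

n, p = 50, 0.5   # 50 flips of a fair coin, as stated in the question
a = 40           # hypothetical threshold; the question's value is truncated
bound = markov_bound(n * p, a)   # E[X] = n * p = 25
exact = binomial_tail(n, p, a)
print(f"Markov bound: {bound:.4f}, exact tail: {exact:.2e}")
```

The Markov bound here is 25/40 = 0.625, which is far looser than the exact tail probability; that gap is typical, because the inequality uses only the mean.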

Q: Find the vector of stable probabilities for the Markov chain whose transition matrix is

A: For a steady-state vector W of stable probabilities and a transition matrix P, $WP = W$, where W = steady state…

Q: Describe each of the five “Gauss Markov” assumptions, (define them) and explain in the context of…

A: In statistics, the Gauss-Markov theorem states that the ordinary least squares estimator has the…

Q: A company has two machines. During any day, each machine that is working at the beginning of the day…

A: A transition probability matrix is to be formulated for the given problem, with the number of…

Q: How do I use Markov's and Chebyshev's inequalities to obtain bounds for the probability of having an…

A: Using Markov's Inequality: According to Markov's Inequality, if X is a non-negative random variable…

Q: What is the stable vector of this Markov chain?

A: The given matrix is $P = \begin{bmatrix} 1 & 0 & 0 \\ \tfrac{1}{2} & 0 & \tfrac{1}{2} \\ \tfrac{1}{4} & \tfrac{3}{4} & 0 \end{bmatrix}$. The formula for the stable vector is: PX=X…

Q: A linear Markovian death process initialized at 8 members experiences an average weekly death rate p…

A: Members = 8; death rate = 0.3. The answer is given using the concept of expectation.

Q: A Markov Chain has the transition matrix P = 1 and currently has state vector % % |. What is the…

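A: From the given information, $P = \begin{bmatrix} \tfrac{1}{2} & \tfrac{1}{2} \\ 0 & 1 \end{bmatrix}$. Let $\pi = \begin{bmatrix} \tfrac{1}{6} & \tfrac{5}{6} \end{bmatrix}$. Consider, the probability vector at stage 1 is,…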
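The stage-1 vector is the current state vector multiplied by the transition matrix. A minimal sketch follows, assuming the flattened digits in the answer above read as P with rows (1/2, 1/2) and (0, 1) and π = (1/6, 5/6); that reading is an assumption, and `step` is an illustrative helper name.

```python
from fractions import Fraction as F

def step(state, P):
    """Return the row vector state * P for a row-stochastic matrix P."""
    n = len(P)
    return [sum(state[i] * P[i][j] for i in range(n)) for j in range(n)]

# Assumed reading of the flattened matrix and state vector.
P = [[F(1, 2), F(1, 2)],
     [F(0), F(1)]]
pi0 = [F(1, 6), F(5, 6)]

pi1 = step(pi0, P)
print(pi1)  # [Fraction(1, 12), Fraction(11, 12)]
```

Under that reading, the second state is absorbing, so repeated calls to `step` move probability mass steadily into it.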

Q: Find the vector of stable probabilities for the Markov chain whose transition matrix is

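A: Let the stable vector of probabilities be $W = \begin{bmatrix} x & y & z \end{bmatrix}$, where $x + y + z = 1$. Let $P = \begin{bmatrix} 0 & 1 & 0 \\ 0 & 0.6 & 0.4 \\ 1 & 0 & 0 \end{bmatrix}$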
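The system WP = W with x + y + z = 1 can be solved exactly. The sketch below is one way to do it, assuming the matrix in the answer above reads row by row as (0, 1, 0), (0, 0.6, 0.4), (1, 0, 0); it uses Gauss-Jordan elimination over exact fractions, and `stable_vector` is an illustrative name rather than anything from the original solution.

```python
from fractions import Fraction

def stable_vector(P):
    """Solve W P = W with sum(W) = 1 by Gauss-Jordan elimination."""
    n = len(P)
    # Rows of (P^T - I); each row is one equation of W P = W.
    A = [[Fraction(P[j][i]) - (1 if i == j else 0) for j in range(n)]
         for i in range(n)]
    b = [Fraction(0)] * n
    # The equations are linearly dependent, so replace the last one
    # with the normalisation sum(W) = 1.
    A[-1] = [Fraction(1)] * n
    b[-1] = Fraction(1)
    for col in range(n):
        # Raises StopIteration if no pivot exists (non-unique stable vector).
        pivot = next(r for r in range(col, n) if A[r][col] != 0)
        A[col], A[pivot] = A[pivot], A[col]
        b[col], b[pivot] = b[pivot], b[col]
        inv = 1 / A[col][col]
        A[col] = [a * inv for a in A[col]]
        b[col] *= inv
        for r in range(n):
            if r != col and A[r][col] != 0:
                f = A[r][col]
                A[r] = [a - f * c for a, c in zip(A[r], A[col])]
                b[r] -= f * b[col]
    return b

# Assumed reading of the flattened matrix; 0.6 and 0.4 written as exact fractions.
P = [[0, 1, 0],
     [0, Fraction(3, 5), Fraction(2, 5)],
     [1, 0, 0]]
W = stable_vector(P)
print(W)  # [Fraction(2, 9), Fraction(5, 9), Fraction(2, 9)]
```

Working in `Fraction` avoids rounding, so the result can be checked by hand: x = z and 0.4y = x give W = (2/9, 5/9, 2/9).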

Q: Determine the 3-step stochastic matrix of the Markov chain! Determine the distribution of the…

A: a) From the given transition diagram, there are 3 states 0, 1, 2 and the transition matrix is,…

Q: (Note: Express your answers as decimal fractions rounded to 4 decimal places (if they have more than…

A: We have been given the transition probability matrix (TPM) as $P = \begin{bmatrix} 0.7 & 0.3 \\ 0.2 & 0.8 \end{bmatrix}$. Let the vector W be…
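For a two-state chain the stable vector has a closed form: if a is the probability of leaving state 1 and b the probability of leaving state 2, then W = (b/(a+b), a/(a+b)). A short sketch, assuming the TPM above reads as rows (0.7, 0.3) and (0.2, 0.8), with the result rounded to 4 decimal places as the question asks; the function name is illustrative.

```python
def two_state_stable(P):
    """Stable vector of a 2x2 row-stochastic matrix via the closed form."""
    a = P[0][1]  # probability of moving from state 1 to state 2
    b = P[1][0]  # probability of moving from state 2 to state 1
    total = a + b
    if total == 0:
        raise ValueError("both states are absorbing; the stable vector is not unique")
    return (b / total, a / total)

P = [[0.7, 0.3],
     [0.2, 0.8]]
w = tuple(round(x, 4) for x in two_state_stable(P))
print(w)  # (0.4, 0.6)
```

Substituting back gives 0.4·0.7 + 0.6·0.2 = 0.4 and 0.4·0.3 + 0.6·0.8 = 0.6, so WP = W holds.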

Q: A Markov Chain has the transition matrix P = and currently has state vector % %). What is the…

A: From the given information, Consider, the probability vector at stage 1 is,

Q: Find the equilibrium distribution of the Markov chain above

A:

| State | 1 | 2 | 3 | 4 |
|---|---|---|---|---|
| 1 | 0 | 0.9 | 0.1 | 0 |
| 2 | 0.8 | 0.1 | 0 | 0.1 |
| 3 | 0 | 0.5 | 0.3 | 0.2 |
| 4 | 0.1 | 0 | 0 | 0.9 |

Transition…
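One way to approximate the equilibrium distribution numerically is power iteration: start from any distribution and multiply by P until it stops changing. The sketch below uses the transition probabilities listed in the answer above; the tolerance, iteration cap, and function name are illustrative choices, not part of the original solution.

```python
def power_iteration(P, tol=1e-12, max_iter=100_000):
    """Iterate w <- w P from the uniform distribution until it stabilises."""
    n = len(P)
    w = [1.0 / n] * n
    for _ in range(max_iter):
        nxt = [sum(w[i] * P[i][j] for i in range(n)) for j in range(n)]
        if max(abs(x - y) for x, y in zip(nxt, w)) < tol:
            return nxt
        w = nxt
    raise RuntimeError("power iteration did not converge")

P = [[0.0, 0.9, 0.1, 0.0],
     [0.8, 0.1, 0.0, 0.1],
     [0.0, 0.5, 0.3, 0.2],
     [0.1, 0.0, 0.0, 0.9]]
w = power_iteration(P)
print([round(x, 4) for x in w])
```

This chain is irreducible and has self-loops, so the iteration converges; solving wP = w by hand gives w = (63, 68, 9, 86)/226, roughly (0.2788, 0.3009, 0.0398, 0.3805), which the iteration should match.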

Q: A Markov chain has transition matrix 글 0 글 3 Given the initial probabilities ø1 = $2 = $3 = , find…

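A: Given the transition matrix of the Markov chain is $P = \begin{bmatrix} \tfrac{1}{2} & \tfrac{1}{6} & \tfrac{1}{3} \\ \tfrac{1}{2} & 0 & \tfrac{1}{2} \\ \tfrac{3}{4} & \tfrac{1}{4} & 0 \end{bmatrix}$. The initial probabilities…

Q: Find the vector W of stable probabilities for the Markov chain whose transition matrix appears…

A: The transition matrix of the Markov chain is $P = \begin{bmatrix} 0.7 & 0.3 \\ 0.8 & 0.2 \end{bmatrix}$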

Q: In a binomial model, give an example of a stochastic process that is both a martingale and Markov.

A: BINOMIAL DISTRIBUTION: The binomial distribution is a probability distribution…

Q: 5. Explain what is meant by BLUE estimates and the Gauss-Markov theorem. Mathematic relevant proofs…

A: The Gauss Markov theorem tells us that if a certain set of assumptions are met, the ordinary least…

Q: Show that if X,, X,... is a Markov chain, then it is sta second-order probability masses: P{X, = X.…

A: Markov chains are used for the study of temporal and sequence data to interpret the dependencies and…

Q: A coffee shop has two coffee machines, and only one coffee machine is in operation at any given…

A: Given that only one machine is in operation at any given time. If machine 1 is working, machine 2…

Q: If the initial state probability of a Markov chain is P = () and the tpm of the chain is the…

A: The initial state probability is given as $P_0 = \left(\tfrac{5}{6}, \tfrac{1}{6}\right)$. Also the Transition Probability Matrix (TPM) is…

Q: A video cassette recorder manufacturer is so certain of its quality control that it is offering a…

A: The Markov chain for the given problem can be modeled with 4 states depicting the year after…

Q: Define Markov matrix.

A: An n×n matrix is called a Markov matrix if all entries are non-negative and the sum of each column…

Q: Give an example of one-step transition probabilities for a renewal Markov chain that is null…

A: Given: One-step transition probabilities for a renewal Markov chain that is null recurrent.

Q: Find the stable vector of 1 P 3 4 Note that although this Markov chain may not be regular, the…

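A: Given information: $P = \begin{bmatrix} 1 & 0 & 0 \\ \tfrac{1}{2} & \tfrac{1}{2} & 0 \\ \tfrac{1}{4} & 0 & \tfrac{3}{4} \end{bmatrix}$. The stable vector is the probability row vector such that: w·P=w…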

Q: Suppose that in any given period an unemployed person will find a job with probability 0.7 and will…

A: Given information: The probabilities of employment and unemployment are given.

- Explain how you can determine the steady state matrix X of an absorbing Markov chain by inspection.
- 12. Robots have been programmed to traverse the maze shown in Figure 3.28 and at each junction randomly choose which way to go. Figure 3.28 (a) Construct the transition matrix for the Markov chain that models this situation. (b) Suppose we start with 15 robots at each junction. Find the steady state distribution of robots. (Assume that it takes each robot the same amount of time to travel between two adjacent junctions.)