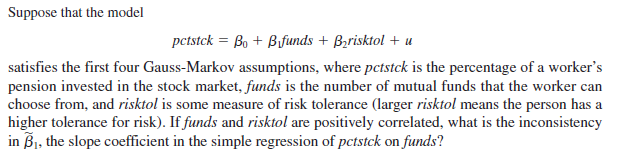

Suppose that the model $\mathit{pctstck} = \beta_0 + \beta_1 \mathit{funds} + \beta_2 \mathit{risktol} + u$ satisfies the first four Gauss-Markov assumptions, where pctstck is the percentage of a worker's pension invested in the stock market, funds is the number of mutual funds that the worker can choose from, and risktol is some measure of risk tolerance (larger risktol means the person has a higher tolerance for risk). If funds and risktol are positively correlated, what is the inconsistency in $\tilde{\beta}_1$, the slope coefficient in the simple regression of pctstck on funds?
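For orientation, the standard omitted-variable result is that the simple-regression slope converges to $\beta_1 + \beta_2 \delta_1$, where $\delta_1 = \mathrm{Cov}(\mathit{funds}, \mathit{risktol}) / \mathrm{Var}(\mathit{funds})$, so the inconsistency is $\beta_2 \delta_1$. With funds and risktol positively correlated, $\delta_1 > 0$, and if greater risk tolerance raises the stock share ($\beta_2 > 0$, a natural reading but still an assumption), the inconsistency is positive. The sketch below checks this on simulated data. Every numeric value in it (coefficients, sample size, seed) is a hypothetical choice made for illustration and does not come from the problem.

```python
import random


def simple_regression_slope(x, y):
    """OLS slope from regressing y on x (with an intercept)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    return sxy / sxx


def simulate(beta1=0.5, beta2=2.0, delta1=0.6, n=100_000, seed=0):
    """Simulate the two-regressor model, then omit risktol.

    All parameter values are hypothetical, chosen only for illustration.
    Returns (slope of pctstck on funds alone, beta1 + beta2 * delta1_hat).
    """
    rng = random.Random(seed)
    funds = [rng.gauss(0, 1) for _ in range(n)]
    # risktol is positively correlated with funds because delta1 > 0
    risktol = [delta1 * f + rng.gauss(0, 1) for f in funds]
    pctstck = [
        1.0 + beta1 * f + beta2 * r + rng.gauss(0, 1)
        for f, r in zip(funds, risktol)
    ]
    slope_short = simple_regression_slope(funds, pctstck)
    # Sample analogue of delta1 = Cov(funds, risktol) / Var(funds)
    delta1_hat = simple_regression_slope(funds, risktol)
    return slope_short, beta1 + beta2 * delta1_hat


if __name__ == "__main__":
    slope_short, predicted = simulate()
    print("simple-regression slope:", slope_short)
    print("beta1 + beta2 * delta1_hat:", predicted)
```

In a large sample the two printed numbers should be close to each other and both should exceed the true `beta1`, which is the positive inconsistency described above.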

Linear Algebra: A Modern Introduction

4th Edition

ISBN: 9781285463247

Author: David Poole

Publisher: Cengage Learning

Chapter 3: Matrices

Section 3.7: Applications

Problem 14EQ
