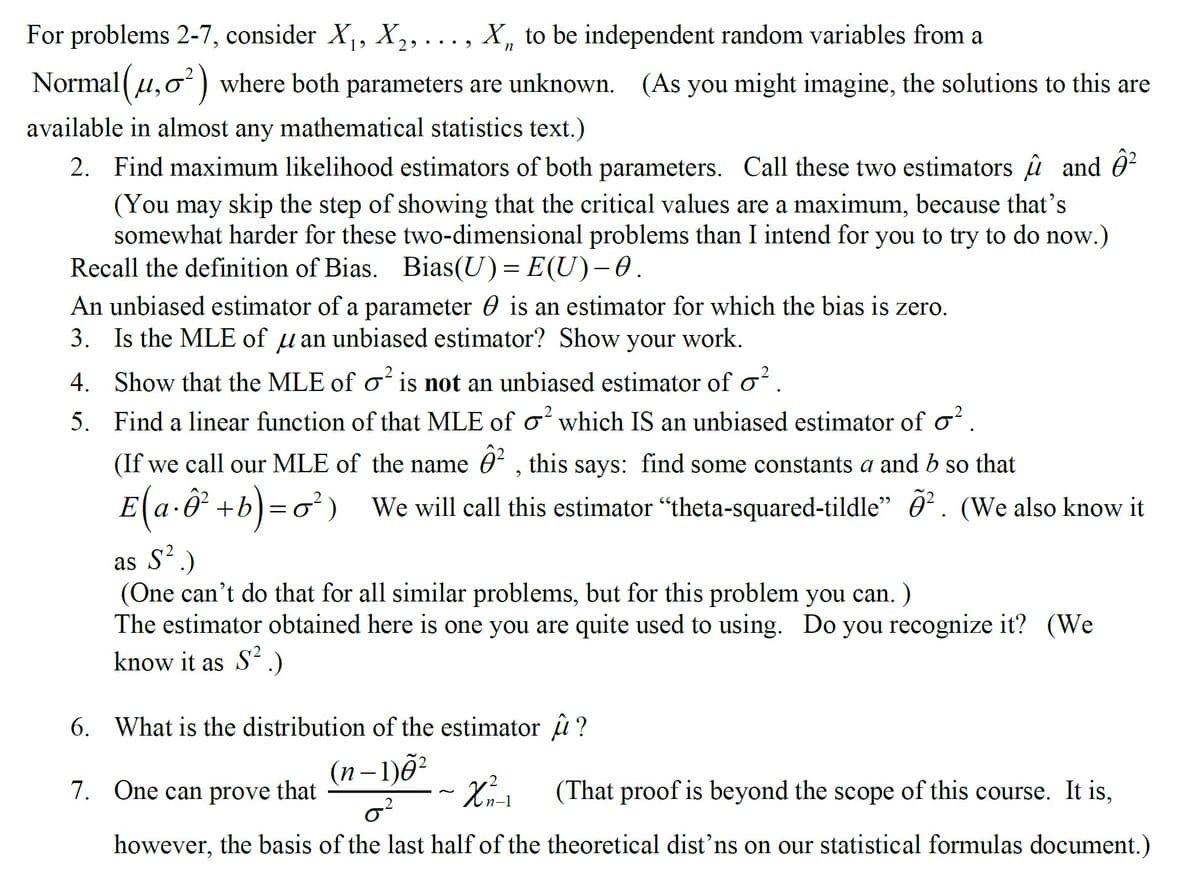



Question

Consider X₁, X₂, …, Xₙ to be independent random variables from a Normal(μ, σ²) distribution, where both parameters are unknown.

Transcribed Image Text:

For problems 2-7, consider $X_1, X_2, \ldots, X_n$ to be independent random variables from a Normal$(\mu, \sigma^2)$ where both parameters are unknown. (As you might imagine, the solutions to this are available in almost any mathematical statistics text.)

2. Find maximum likelihood estimators of both parameters. Call these two estimators $\hat{\mu}$ and $\hat{\sigma}^2$. (You may skip the step of showing that the critical values are a maximum, because that's somewhat harder for these two-dimensional problems than I intend for you to try to do now.)

Recall the definition of bias: $\mathrm{Bias}(U) = E(U) - \theta$. An unbiased estimator of a parameter $\theta$ is an estimator for which the bias is zero.

3. Is the MLE of $\mu$ an unbiased estimator? Show your work.

4. Show that the MLE of $\sigma^2$ is not an unbiased estimator of $\sigma^2$.

5. Find a linear function of that MLE of $\sigma^2$ which IS an unbiased estimator of $\sigma^2$. (If we call our MLE $\hat{\sigma}^2$, this says: find some constants $a$ and $b$ so that $E(a\hat{\sigma}^2 + b) = \sigma^2$.) We will call this estimator "sigma-squared-tilde", $\tilde{\sigma}^2$. (We also know it as $S^2$.) (One can't do that for all similar problems, but for this problem you can.) The estimator obtained here is one you are quite used to using. Do you recognize it? (We know it as $S^2$.)

6. What is the distribution of the estimator $\hat{\mu}$?

7. One can prove that $\dfrac{(n-1)\tilde{\sigma}^2}{\sigma^2} \sim \chi^2_{n-1}$. (That proof is beyond the scope of this course. It is, however, the basis of the last half of the theoretical distributions on our statistical formulas document.)
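The claims in problems 3-5 can be checked numerically before proving them. The sketch below is not part of the original problem set. It assumes the standard results: the MLEs are $\hat{\mu} = \bar{X}$ and $\hat{\sigma}^2 = \frac{1}{n}\sum_i (X_i - \bar{X})^2$, and $E(\hat{\sigma}^2) = \frac{n-1}{n}\sigma^2$, so $a = \frac{n}{n-1}$ and $b = 0$ give the unbiased estimator $S^2$. The function name `simulate` and the values of `n`, `mu`, `sigma`, `reps`, and `seed` are illustrative choices only.

```python
import random


def simulate(n=5, mu=2.0, sigma=3.0, reps=20000, seed=0):
    """Average three estimators over many simulated normal samples of size n.

    Returns the Monte Carlo means of:
      - mu_hat     = sample mean (MLE of mu)
      - sigma2_hat = sum of squared deviations / n       (MLE of sigma^2)
      - s2         = sum of squared deviations / (n - 1) (candidate unbiased estimator)
    """
    rng = random.Random(seed)  # seeded so repeated runs give the same numbers
    total_mu_hat = total_sigma2_hat = total_s2 = 0.0
    for _ in range(reps):
        sample = [rng.gauss(mu, sigma) for _ in range(n)]
        xbar = sum(sample) / n
        ss = sum((x - xbar) ** 2 for x in sample)  # sum of squared deviations
        total_mu_hat += xbar
        total_sigma2_hat += ss / n
        total_s2 += ss / (n - 1)
    return total_mu_hat / reps, total_sigma2_hat / reps, total_s2 / reps


n, mu, sigma = 5, 2.0, 3.0
mean_mu_hat, mean_sigma2_hat, mean_s2 = simulate(n=n, mu=mu, sigma=sigma)

print("true mu:", mu, " average of mu_hat:", mean_mu_hat)
print("true sigma^2:", sigma**2)
print("average of MLE sigma2_hat:", mean_sigma2_hat,
      " theory (n-1)/n * sigma^2:", (n - 1) / n * sigma**2)
print("average of S^2:", mean_s2)
```

With a small `n` the gap between the two variance estimators is easy to see: the average of the MLE should land near $\frac{n-1}{n}\sigma^2$ rather than $\sigma^2$, while the average of $S^2$ should land near $\sigma^2$. A simulation only suggests the answer; the problems still ask for the expectation to be worked out algebraically.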
